The Project has 2 phases Website Crawler and Keywords Search. The project uses Technology java, python, Web-Server, Machine Learning, Artificial Intelligence (AI), Natural language processing (NLP) and Natural language generation (NLG).

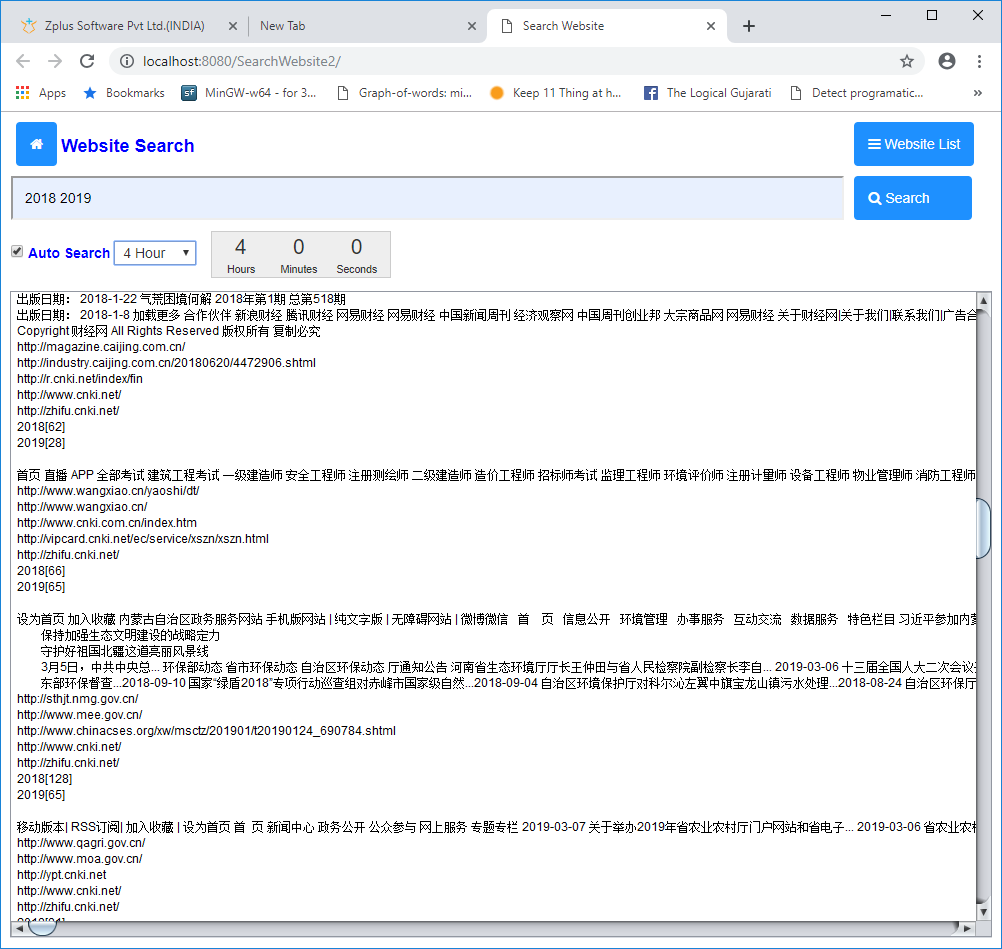

The output shown in dashboard and consist of 3 parts using NLP & NLG location-wise.

(1) The paragraphs containing keywords that we searched for both original text and English translation

(2) Tree of websites traversed showing links as depth

(3) Frequency statistics of keywords.

We tested project on websites that's are

http://zhifu.cnki.net

http://xb.81.cn

http://db.81.cn

http://nb.81.cn

http://www.haohanfw.com

http://bbs.tiexue.net

http://www.360doc.com

http://blog.sina.com.cn

http://military.cnr.cn

https://www.weibo.com

https://mil.sina.cn

https://lt.cjdby.net

Web scraping where more than 1000+ website is downloading using Httrack. Whole internal website and External 4 levels of depth. The user has an option of directing the program to crawl sites at a stipulated time like say every 2 3 6 12 hours or one day.

keyword searching using elastic Search from crawled data which might be in any language - Chinese, Arabic, Urdu, English, etc. We have tested project on crawled websites where data is more than 50 GB and Searching Speed is 1-2 Second.